Difference Between Camera and Eye: Understanding Capture and Perception

Explore the difference between camera and eye, comparing light capture, processing, and perception. A detailed, analytical guide for photographers and vision enthusiasts.

At a high level, the difference between camera and eye lies in how light is captured and interpreted. A camera uses a fixed optical path and a digital sensor, translating photons into color data. The eye relies on a flexible lens, a dynamic pupil, and neural processing to continuously adapt, interpret, and perceive depth, motion, and color. Understanding this difference helps photographers design better lighting and exposure strategies. The camera view emphasizes repeatability and control, while the eye emphasizes context, attention, and experience. This contrast informs everyday decisions in exposure, focus, and composition.

The difference between camera and eye in practice

The difference between camera and eye is not merely about hardware; it reflects two systems built to translate light into meaning. According to Best Camera Tips, understanding this difference helps photographers design better lighting and exposure strategies. The camera is engineered for repeatable results, while the eye's response shifts with context, attention, and experience, and that distinction informs everyday photography decisions, from exposure to composition. By framing the difference as a conversation between sensor physics and neural perception, beginners can approach shots with a clearer set of priorities. The eye excels at continuous adaptation, dynamic range, and real-time context, while the camera excels at control, repeatability, and documentation. The sections that follow show how each system handles a scene and how to translate eye behavior into camera practice.

How the eye perceives light vs how a camera captures light

The eye perceives light through the retina, whose photoreceptors are unevenly distributed: cones are packed densely at the center and deliver sharp color vision, while rods dominate the periphery and take over in low light. The brain constructs color and brightness by integrating signals over time, using context and experience to fill gaps. A camera, by contrast, captures light with a sensor that converts photons into electrons, then reconstructs color through a Bayer filter array, demosaicing, and white balance. Both systems respond to the subject and the lighting, but in different ways: the eye continuously adjusts pupil size to regulate brightness and lens shape to hold focus, preserving detail across luminance ranges through dynamic adaptation, while a camera relies on exposure settings and post-processing to maximize detail. When you analyze the difference between camera and eye, consider how the camera's tone (gamma) curve interacts with scene contrast and how the brain weights central vision versus peripheral cues. This section outlines the core distinctions that drive everyday photography and visual perception.
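To make the Bayer filter step concrete, here is a minimal sketch of how a mosaic sensor records only one color channel per photosite. It assumes an RGGB layout, which is one common arrangement and not necessarily the one in your camera, and the function names are hypothetical.

```python
def bayer_channel(row, col):
    """Channel recorded at (row, col), assuming an RGGB Bayer layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def mosaic(image):
    """Keep only the filtered channel at each photosite.

    `image` is a list of rows, each row a list of (r, g, b) tuples.
    The result has one number per site; demosaicing would later
    estimate the two missing channels from neighboring sites.
    """
    index = {"R": 0, "G": 1, "B": 2}
    return [
        [pixel[index[bayer_channel(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(image)
    ]


# A uniform 2x2 patch: every site sees the same light but stores one channel.
patch = [[(10, 20, 30), (10, 20, 30)],
         [(10, 20, 30), (10, 20, 30)]]
raw = mosaic(patch)
```

In this layout half of the sites record green, so two thirds of the color values in the final image are interpolated rather than measured.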

Focusing systems: accommodation vs autofocus

The eye focuses by changing lens curvature through accommodation, a rapid yet fluid process that keeps objects sharp as they move. In cameras, focusing is achieved through autofocus or manual focus, with autofocus systems detecting contrast or phase differences. The difference between camera and eye here is not only speed but how depth is interpreted. While the eye models depth using stereopsis and perspective, the camera renders depth through depth of field, focal length, and sensor size. In practice, photographers manage focus by selecting AF points or focusing manually, then adjust aperture to control depth of field. Understanding the interaction helps you design creative strategies, for example selecting a longer focal length to isolate a subject where the eye would naturally take in the whole scene and its many cues. The Best Camera Tips approach emphasizes testing how your camera responds to moving subjects and how your eye tracks motion to remain engaged with the subject. The result is a balanced technique that respects both systems.
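How aperture, focal length, and focus distance set the zone of acceptable sharpness can be sketched with the standard thin-lens depth of field approximations. The function names are hypothetical, and the 0.03 mm circle of confusion is an assumed full-frame convention, not a value from this article.

```python
import math


def hyperfocal_mm(focal_mm, f_number, coc_mm=0.03):
    """Hyperfocal distance in mm: H = f^2 / (N * c) + f.

    coc_mm (circle of confusion) is an assumed convention.
    """
    return focal_mm ** 2 / (f_number * coc_mm) + focal_mm


def depth_of_field_mm(focal_mm, f_number, subject_mm, coc_mm=0.03):
    """Return (near, far) limits of acceptable sharpness in mm.

    far is math.inf when the subject sits at or beyond the
    hyperfocal distance.
    """
    h = hyperfocal_mm(focal_mm, f_number, coc_mm)
    near = subject_mm * (h - focal_mm) / (h + subject_mm - 2 * focal_mm)
    if subject_mm >= h:
        far = math.inf
    else:
        far = subject_mm * (h - focal_mm) / (h - subject_mm)
    return near, far


# Same 50 mm lens and 3 m subject, wide open versus stopped down.
wide_open = depth_of_field_mm(50, 2, 3000)
stopped_down = depth_of_field_mm(50, 8, 3000)
```

Comparing `wide_open` with `stopped_down` shows the sharp zone growing as the aperture closes. This is the camera's fixed substitute for the eye's continuous refocusing.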

Exposure and dynamic range: adaptation vs sensor limitations

Exposure is the bridge between eye perception and camera capture. The eye adapts rapidly to lighting by adjusting pupil size and neural gain, maintaining apparent brightness across a wide range. A camera, however, is bounded by sensor sensitivity, ISO performance, and the dynamic range of the capture pipeline. The difference between camera and eye becomes most evident in high-contrast scenes: the eye can bias attention to the subject and compress or expand perceived brightness more flexibly, while the camera may require metering strategies, exposure compensation, or HDR techniques. Understanding these dynamics allows photographers to bracket thoughtfully, harness shadows and highlights, and design scenes that the camera can reproduce faithfully without sacrificing the scene’s natural feel. Best Camera Tips advises testing different metering modes and reviewing histograms to align camera output with eye-like brightness judgments.
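The bracketing and histogram advice can be turned into a rough planning aid. The sketch below expresses a contrast ratio in stops, estimates how many bracketed frames are needed, and measures histogram clipping. The function names are hypothetical, and the frame count assumes each extra frame extends coverage by exactly one bracket step.

```python
import math


def stops(brightest, darkest):
    """Luminance ratio expressed in photographic stops (powers of two)."""
    return math.log2(brightest / darkest)


def frames_needed(scene_stops, sensor_stops, step_stops):
    """Bracketed frames needed for the combined captures to span the scene.

    Assumption: each extra frame extends coverage by step_stops, which
    holds when the step is no larger than the sensor's own range.
    """
    if scene_stops <= sensor_stops:
        return 1
    return 1 + math.ceil((scene_stops - sensor_stops) / step_stops)


def clipped_fraction(pixels, low=0, high=255):
    """Share of 8-bit values piled up at the black and white ends."""
    n = len(pixels)
    shadows = sum(1 for p in pixels if p <= low) / n
    highlights = sum(1 for p in pixels if p >= high) / n
    return shadows, highlights


# Hypothetical numbers: a 16-stop scene, a 12-stop sensor, 2-stop brackets.
plan = frames_needed(16, 12, 2)
```

A large highlight share from `clipped_fraction` is the numeric counterpart of a histogram pressed against the right edge. It signals that a darker bracket or negative exposure compensation is worth trying.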

Color processing and white balance: retinal interpretation vs sensor color science

Color in the eye emerges from complex retinal processing and brain interpretation, influenced by lighting context, surrounding colors, and memory. Cameras reproduce color through sensor color filters, demosaicing, white balance adjustments, and tone mapping. The difference between camera and eye here lies in calibration and interpretation: the eye adapts to lighting swings such as sunset warmth or fluorescent tint, whereas a camera relies on white balance profiles and post-processing to align with human perception. By understanding these mechanisms, photographers can anticipate color shifts, shoot in RAW to retain flexibility, and apply color grading that respects the scene’s natural mood. The camera can approximate the color an eyewitness would perceive with careful white balance and color science, but it remains a synthetic interpretation rather than a neural construction.
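As an illustration of what a white balance adjustment does numerically, here is a minimal sketch of the gray-world heuristic, which assumes the scene averages out to neutral gray. It is a simple classical method chosen for clarity, not the algorithm any particular camera uses, and the function names are hypothetical.

```python
def gray_world_gains(pixels):
    """Per-channel gains that equalize average R, G, B (green as reference).

    Assumption: the scene should average to neutral gray.
    """
    n = len(pixels)
    avg_r = sum(p[0] for p in pixels) / n
    avg_g = sum(p[1] for p in pixels) / n
    avg_b = sum(p[2] for p in pixels) / n
    return avg_g / avg_r, 1.0, avg_g / avg_b


def apply_gains(pixels, gains, max_value=255):
    """Scale each channel by its gain, clipping to the valid range."""
    return [
        tuple(min(max_value, round(c * g)) for c, g in zip(p, gains))
        for p in pixels
    ]


# Two pixels with a strong warm cast (too much red, too little blue).
warm = [(200, 100, 50), (100, 50, 25)]
gains = gray_world_gains(warm)
balanced = apply_gains(warm, gains)
```

The heuristic fails on scenes dominated by one color, such as a sunset, which is one reason shooting in RAW and setting white balance afterward remains useful.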

Depth perception and field of view: stereo cues vs focal plane

Depth perception in human vision relies on stereo cues, motion parallax, and contextual cues to interpret distance. The camera conveys depth primarily through perspective, focal length, and depth of field, projecting a scene onto a flat sensor. The difference between camera and eye shows up in spatial judgment: the eye can refocus in real time, while the camera fixes the plane of focus at the moment of capture. Photographers compensate by choosing lenses, subject spacing, and framing that guide the viewer through layers of depth. Eye-driven cues like motion parallax inform how you compose movement in a shot, while camera-based decisions define what remains sharp. Understanding this helps you craft images with a convincing sense of depth while preserving the path the viewer’s eye takes through the frame.

Noise, resolution, and detail: retinal sampling vs sensor resolution

The retina samples the scene through a nonuniform mosaic, with high acuity in the fovea and lower sampling in the periphery. A camera captures detail according to sensor resolution, microlenses, and noise performance at various ISO levels. The difference between camera and eye becomes visible in low light: the eye uses neural processing to maintain perceived detail, while the camera struggles with grain and color shift unless you carefully manage ISO and exposure. For photographers, this means recognizing where your eye would expect detail and ensuring your camera resolution and noise performance meet those expectations. Post processing can help recover detail, but the core limitation lies in hardware and sensor design rather than perception alone.
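Why low light costs the camera detail can be sketched with photon shot noise, where the signal-to-noise ratio of an ideal pixel grows only with the square root of the photons collected. This assumes an idealized pixel and ignores read noise and other sources; the function names and photon counts are hypothetical.

```python
import math


def shot_noise_snr(photons):
    """SNR of an ideal photon-counting pixel: N / sqrt(N) = sqrt(N).

    Assumption: shot noise only; read noise and others are ignored.
    """
    return math.sqrt(photons)


def snr_with_less_light(photons, stops_less):
    """SNR after the collected light drops by the given number of stops."""
    return shot_noise_snr(photons / 2 ** stops_less)


# Hypothetical pixel collecting 10,000 photons, then two stops less light.
bright = shot_noise_snr(10_000)
dim = snr_with_less_light(10_000, 2)
```

Raising ISO brightens the dimmer capture but amplifies signal and noise together, so the ratio does not recover. Only more light, through a wider aperture or longer exposure, improves it.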

Practical implications for photography: translating eye behavior into camera settings

Knowing how the eye processes scenes suggests practical camera habits. In bright scenes, you may favor faster shutter speeds and careful metering to preserve midtones, replicating the eye’s ability to avoid blown highlights. In shadows, you can leverage exposure compensation and RAW editing to retain detail, mirroring the eye’s sensitivity to darker regions when reading a scene. Focus decisions should balance eye fixation with subject priority, using depth of field to guide attention as your eye does naturally. Color grading should reflect eye-adapted perception, avoiding exaggerated color shifts that conflict with real-world viewing. Testing how your camera handles a scene under varying lighting helps bridge the gap between eye perception and captured images, yielding more faithful representations of what you actually see.
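When you trade shutter speed against aperture to follow these habits, the exposure value relation keeps the arithmetic honest. This is a minimal sketch with hypothetical function names, using the standard definition EV = log2(N² / t) at a fixed ISO.

```python
import math


def exposure_value(f_number, shutter_s):
    """Exposure value at fixed ISO: EV = log2(N^2 / t)."""
    return math.log2(f_number ** 2 / shutter_s)


def equivalent_shutter(f_number, shutter_s, new_f_number):
    """Shutter time that keeps the same exposure at a different aperture."""
    return shutter_s * (new_f_number / f_number) ** 2


# Stopping down from f/4 to f/8 for more depth of field costs two stops,
# so the shutter must stay open four times as long.
slower = equivalent_shutter(4, 1 / 100, 8)
```

Here `slower` is four times the original 1/100 s, so the extra depth of field is paid for in motion blur risk. The eye never has to make this trade explicitly.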

Practical experiments you can run to compare camera and eye in real scenes

Run a simple paired test: photograph the same scene at two different exposure settings, then review the results next to the scene itself, looking away and back between the two. Compare how the eye perceives brightness and color versus the camera’s output. Repeat with moving subjects to observe autofocus behavior and eye tracking. Try a high-contrast scene and compare highlight retention with eye perception of the same moment. Document your observations and adjust exposure and white balance so the final image aligns more closely with what you observed visually. These exercises develop intuition for how the camera and eye interact and help you translate eye cues into repeatable camera actions.

Comparison

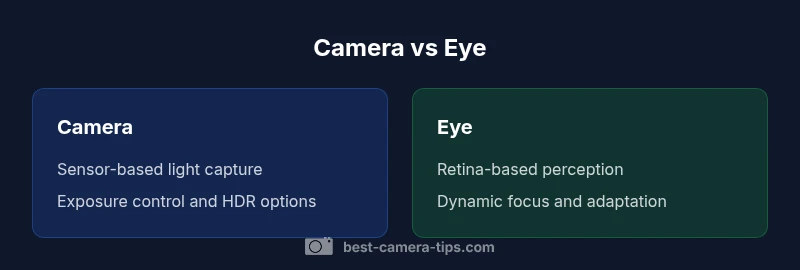

| Feature | Camera | Eye |
|---|---|---|
| Light capture mechanism | Sensor-based photon capture with ISO control | Retina-based photon detection with neural processing |
| Focusing system | Autofocus / manual focus via lens motors | Accommodation / vergence via lens shape and ciliary muscles |
| Dynamic range handling | Sensor dynamic range with exposure and HDR options | Biological dynamic range via neural adaptation |
| Color processing | Color filters, white balance, sensor demosaicing | Color perception via brain processing and sampling |
| Depth perception | Depth via depth of field and AF, parallax minimal | Depth via stereo cues and neural interpretation |
| Resolution | Pixel-based resolution; sensor size | Retinal sampling; foveal acuity vs periphery |
| Motion handling | Frame rate, rolling shutter, stabilization | Eye movement, saccades, motion tracking |
| Learning curve | Technique evolves with practice | Vision evolves with experience and context |

Positives

- Clarifies how hardware and biology differ in light capture

- Helps learners design better experiments and tests

- Supports intuitive analogies for beginners

- Encourages mindful camera settings aligned with human vision

Downsides

- Risk of oversimplifying biology

- Could imply one system is superior in all scenarios

- Requires careful framing to avoid misinterpretation

Camera and eye are complementary systems; optimize camera settings to align with how the eye perceives scenes.

Neither can fully replace the other. Understanding their differences improves both capture and viewing. Apply eye-inspired exposure and focus strategies, while relying on camera controls for repeatable results to achieve realistic, engaging photographs.

Common Questions

What is the main difference between camera and eye in image formation?

The camera forms an image by fixed optics and a sensor; the eye forms images via dynamic lens adjustments and neural interpretation. This combination of hardware and biology yields different strengths in exposure, color, and depth perception.

The camera uses sensors to capture light, while the eye relies on brain processing to interpret what you see.

Why do cameras struggle with dynamic range compared to the eye?

The eye adapts continuously using pupil changes and neural processing, whereas cameras have fixed sensor limits. You can mitigate this with exposure bracketing or HDR techniques, but the fundamental limit is sensor-based rather than perceptual.

Cameras can be pushed with HDR, but the eye naturally adapts on the fly.

Can cameras replicate color perception of the eye?

Cameras simulate color through sensor filters and white balance, not true perception. Color grading and RAW processing help bridge the gap, but the final look remains a crafted interpretation rather than natural perception.

Color in cameras is a crafted representation, not exact eye color perception.

How can knowledge of eye behavior improve photography?

Understanding eye behavior helps you anticipate motion, adjust exposure, and place emphasis in a frame. For example, you can pre-visualize how a viewer will scan a scene and set focus and contrast accordingly.

Think like the eye when setting exposure and focus to guide viewer attention.

What exercises can I run to compare camera and eye in scenes?

Set up scenes with high contrast and moving subjects, capture with your camera, then compare to your eye’s impression. Repeat with different lenses and lighting to learn how to adjust your camera setup to match eye perception.

Try side-by-side tests in varied light to see how the camera lines up with what you see.

The Essentials

- Compare light capture vs perception to guide exposure decisions

- Use depth of field to mimic eye focus in complex scenes

- Leverage dynamic range creatively with HDR or post-processing

- Study eye behavior to improve camera ergonomics and workflow

- Practice experiments to translate eye cues into camera actions