Is a Camera the Same as the Human Eye? A Comparative Guide

Explore how cameras compare with the human eye, analyzing dynamic range, color, motion, and perception with an analytical lens from Best Camera Tips.

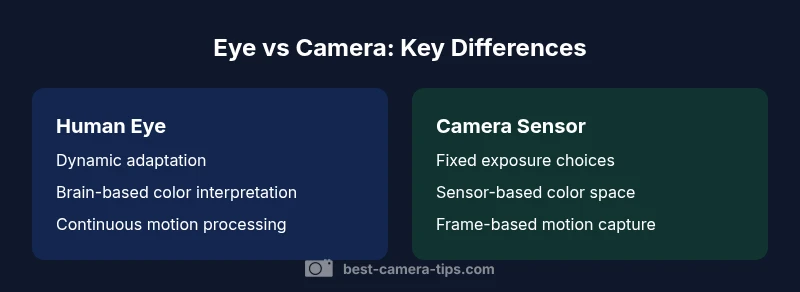

Short answer: is a camera the same as the human eye? No, but cameras mimic several eye-like functions. A camera translates light into electronic signals via a sensor and processing pipeline, while the eye relies on a biological retina and the brain. Key differences include dynamic range, color interpretation, motion perception, and depth cues. Understanding these gaps helps photographers and home security professionals set realistic expectations and optimize gear and settings.

Is the camera the same as the human eye? Foundations

When people ask whether a camera is the same as the human eye, they are comparing two complex, adaptive systems. The camera is a manufactured instrument that converts photons into electronic signals, then into images through a sensor and processor. The human eye is a living organ that integrates light with neural circuits in real time. The place to start is recognizing that both systems share a goal, high-quality vision, but the means differ profoundly. According to Best Camera Tips, the eye emphasizes continuous adaptation, depth cues, and intuitive perception shaped by experience, while the camera emphasizes consistent reproduction, color science, and tunable settings for specific scenes.

Both systems adapt over time: the eye adjusts to brightness with pupil changes and neural gain; modern cameras adjust exposure, ISO, white balance, and tone curves. The analogy is useful for learning, but it's essential to avoid treating the two as interchangeable. The key idea is calibration: each system performs best when calibrated for a given context. In practice, this means understanding exposure, color grading, and lens choice for cameras, and recognizing how lighting, pupil size, and neural processing influence perception for the eye. The Best Camera Tips team emphasizes that learning these distinctions helps amateurs set realistic expectations and avoid treating images as a one-to-one copy of human sight. The rest of this article unpacks these differences in detail and shows how to leverage both systems for better photography and smarter home surveillance.

Light capture and dynamic range: sensors vs retina

Dynamic range is a core difference between camera sensors and the human retina. A camera sensor responds to light roughly linearly and is limited by its architecture, noise floor, and signal processing pipeline. The retina, by contrast, performs local adaptation across its microstructures, giving the human eye a remarkable ability to preserve detail in both shadows and highlights under many conditions. When you ask whether a camera is the same as the human eye, you’ll notice that cameras rely on exposure control, HDR techniques, and sensor design to extend range, while the eye relies on successive fixations and brain-based compression to preserve detail across brightness steps. In practical terms, a well-tuned camera system can sometimes recover highlight information that the eye would immediately perceive as blown out, but it may require post-processing to maintain natural tonality. Conversely, the eye can adapt on the fly to sudden brightness changes, maintaining a sense of scene balance even when light distribution shifts quickly. For home security cameras, this difference explains why modern security cams use local tone-mapping, infrared augmentation, and dual-exposure sensors to see clearly at night without washing out daylight. The core takeaway is that dynamic range is a product of both hardware and processing, not a simple attribute of one system alone, and Best Camera Tips emphasizes adjusting expectations accordingly.
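To make the hardware side of this concrete, here is a minimal sketch of one common engineering definition of sensor dynamic range: the ratio of the largest recordable signal (full-well capacity) to the noise floor (read noise), expressed in stops. The function names and sample numbers are illustrative assumptions, not measurements from any particular camera, and the bracketing estimate is an idealized upper bound that assumes the frames merge perfectly.

```python
import math


def dynamic_range_stops(full_well_electrons: float, read_noise_electrons: float) -> float:
    """Single-exposure dynamic range in stops: log2(max signal / noise floor)."""
    if full_well_electrons <= 0 or read_noise_electrons <= 0:
        raise ValueError("both values must be positive")
    return math.log2(full_well_electrons / read_noise_electrons)


def bracketed_range_stops(single_shot_stops: float, ev_spacing: float, frames: int) -> float:
    """Idealized range covered by an exposure bracket (assumes a perfect merge)."""
    if frames < 1:
        raise ValueError("frames must be at least 1")
    return single_shot_stops + ev_spacing * (frames - 1)


if __name__ == "__main__":
    # Hypothetical sensor: 32768 e- full well, 4 e- read noise.
    single = dynamic_range_stops(32768, 4)
    print(f"single exposure: {single:.1f} stops")
    print(f"3 frames at 2 EV spacing: {bracketed_range_stops(single, 2.0, 3):.1f} stops")
```

Real HDR merges gain less than this estimate suggests, because noise, alignment errors, and subject motion all eat into the extra range.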

Color processing and white balance: color science across systems

Color is more than a single measurement; it is a perceptual construct shaped by biology and context. The human eye perceives color through cone cells and neural interpretation that adapts to lighting and surrounding scenes. Cameras, by contrast, capture color through filters (such as Bayer patterns) and rely on white balance, color profiles, and sensor engineering to render a faithful image. The eye can shift white balance mentally based on scene memory, while a camera applies a fixed color rendering that you adjust with in-camera settings or post-processing. The result is that color accuracy is a joint outcome of sensor design, lens characteristics, and processing choices. For aspiring photographers, understanding this helps with color grading and with choosing a color space that suits the intended output. For security installers, consistent color reproduction helps verify scene details across lighting changes. The Best Camera Tips approach is to treat color as a tunable parameter rather than a fixed truth, and to calibrate your equipment for consistent results across environments.
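As an illustration of white balance as a tunable parameter, the sketch below implements the gray-world heuristic, one simple automatic white balance method (not necessarily what any given camera uses). It assumes the average color of a scene should be neutral gray and scales the red and blue channels to match green. The pixel data and function names are hypothetical.

```python
def gray_world_gains(pixels):
    """Return (r_gain, g_gain, b_gain) that equalize the channel means to green."""
    if not pixels:
        raise ValueError("need at least one pixel")
    n = len(pixels)
    mean_r = sum(p[0] for p in pixels) / n
    mean_g = sum(p[1] for p in pixels) / n
    mean_b = sum(p[2] for p in pixels) / n
    if min(mean_r, mean_g, mean_b) <= 0:
        raise ValueError("channel means must be positive")
    return (mean_g / mean_r, 1.0, mean_g / mean_b)


def apply_gains(pixels, gains, max_value=255):
    """Scale each channel by its gain, rounding and clipping to max_value."""
    return [
        tuple(min(max_value, round(channel * gain)) for channel, gain in zip(pixel, gains))
        for pixel in pixels
    ]


if __name__ == "__main__":
    # Two warm-tinted (orange-ish) sample pixels as (R, G, B).
    warm_pixels = [(200, 100, 50), (100, 100, 50)]
    gains = gray_world_gains(warm_pixels)
    print("gains:", gains)
    print("balanced:", apply_gains(warm_pixels, gains))
```

Gray-world fails on scenes dominated by one color (a green lawn, a red wall), which is one reason a calibration target or a manual white balance preset is often more dependable.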

Spatial resolution, field of view, depth cues

The human eye provides a continuous field of view with peripheral awareness and depth cues like stereopsis and motion parallax, processed by the brain to create a coherent sense of space. A camera captures a finite scene through a lens, with resolution limited by sensor pixel density and lens sharpness. Depth in images is inferred from perspective, shading, and, in some cameras, depth-sensing features like stereo pairs or dual-focus systems. While the eye uses accommodation and brain interpretation to gauge distance, cameras rely on lens choice, perspective, and sometimes computational methods to convey depth. This difference matters for both photographers and home-security planners: wide-angle lenses can emphasize spatial context, while telephoto lenses compress depth. Practically, you should think about focal length, sensor size, and post-processing to reproduce or interpret depth cues in ways that align with your intent. The eye’s dynamic focus and the camera’s fixed optical system create complementary strengths when used together.
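The relationship between focal length, sensor size, and how much of the scene is captured can be sketched with the standard angle-of-view formula for a rectilinear lens focused at infinity, FOV = 2·atan(sensor width / (2·focal length)). The function name and the example lenses below are illustrative choices.

```python
import math


def horizontal_fov_degrees(sensor_width_mm: float, focal_length_mm: float) -> float:
    """Horizontal angle of view for a rectilinear lens focused at infinity."""
    if sensor_width_mm <= 0 or focal_length_mm <= 0:
        raise ValueError("sensor width and focal length must be positive")
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


if __name__ == "__main__":
    full_frame_width_mm = 36.0  # width of a 36 x 24 mm sensor
    for focal_length_mm in (18, 50, 200):
        fov = horizontal_fov_degrees(full_frame_width_mm, focal_length_mm)
        print(f"{focal_length_mm} mm lens: {fov:.1f} degrees horizontal")
```

The same focal length gives a narrower view on a smaller sensor, which is worth checking when comparing a security camera's lens specification with a photography lens.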

Temporal resolution, motion perception, autofocus

Temporal resolution relates to how quickly vision updates and how motion is perceived. The human eye continuously samples the scene with rapid attention shifts and adaptive gain, producing smooth motion perception. A camera operates in discrete frames per second and uses autofocus algorithms to lock onto subjects. This difference shows up in motion blur, rolling shutter artifacts, and the way motion is captured in sequences. For action photography, this means selecting faster shutter speeds, continuous autofocus, and appropriate frame rates to minimize blur. In security applications, understanding the camera’s processing pipeline helps explain why motion may appear choppy on some footage or why certain events are captured more clearly in IP cameras with higher frame rates. The takeaway is to align your camera settings with how you expect movement to be perceived, while recognizing the eye’s superior real-time motion integration.
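A rough way to reason about shutter speed and frame rate is to estimate how far a subject moves across the sensor during one exposure, and how long the gap is between frames. The sketch below uses simple proportional arithmetic; the subject speed in pixels per second is a hypothetical input you would estimate for your own scene.

```python
def motion_blur_pixels(speed_px_per_s: float, shutter_s: float) -> float:
    """Approximate blur length: distance the subject travels during the exposure."""
    return speed_px_per_s * shutter_s


def max_shutter_for_blur(speed_px_per_s: float, max_blur_px: float) -> float:
    """Longest exposure time that keeps blur at or below max_blur_px."""
    if speed_px_per_s <= 0:
        raise ValueError("speed must be positive")
    return max_blur_px / speed_px_per_s


def frame_interval_ms(fps: float) -> float:
    """Time between consecutive frames in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


if __name__ == "__main__":
    speed = 2000.0  # hypothetical subject speed across the frame, in pixels/second
    print(f"blur at 1/500 s: {motion_blur_pixels(speed, 1 / 500):.1f} px")
    print(f"shutter for <= 2 px blur: {max_shutter_for_blur(speed, 2.0):.4f} s")
    print(f"gap between frames at 15 fps: {frame_interval_ms(15):.1f} ms")
```

A low frame rate leaves long gaps between samples, which is one reason fast events can look choppy or be missed entirely in security footage.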

Noise, sensitivity, and image quality under challenging lighting

In low light, the eye gathers light efficiently through pupil dilation and neural amplification, keeping the scene usable by raising its sensitivity. Cameras rely on sensor performance at higher ISO, noise reduction algorithms, and exposure strategies to maintain image integrity. The difference often shows up as visible noise, color shifts, or subtle loss of shadow detail when pushing exposure in cameras. For photo enthusiasts, this argues for shooting in RAW when possible, using noise reduction thoughtfully, and choosing cameras with good high-ISO performance. For home security, it means balancing night vision features, infrared illumination, and sensor sensitivity to ensure dependable detail without excessive noise. The lens aperture and the sensor’s micro-lenses interact in complex ways to determine how much detail remains in the darkest areas.
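Why shadows get noisy can be sketched with a simplified per-pixel noise model: photon shot noise grows as the square root of the collected signal, and read noise adds a fixed floor. The model below ignores dark current and fixed-pattern noise, and the electron counts are made-up illustrative values.

```python
import math


def snr(signal_electrons: float, read_noise_electrons: float) -> float:
    """Signal-to-noise ratio with shot noise (sqrt of signal) plus read noise."""
    if signal_electrons <= 0:
        raise ValueError("signal must be positive")
    total_noise = math.sqrt(signal_electrons + read_noise_electrons ** 2)
    return signal_electrons / total_noise


if __name__ == "__main__":
    read_noise = 4.0  # hypothetical read noise in electrons
    for label, electrons in (("deep shadow", 20), ("midtone", 2000), ("highlight", 20000)):
        print(f"{label}: SNR about {snr(electrons, read_noise):.1f}")
```

Under this model, raising ISO does not add light; it mostly amplifies the signal and noise already captured, so a wider aperture, a longer exposure, or added illumination are what actually improve the ratio.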

Practical implications for photography and home security

A core insight from comparing eye and camera systems is that you should design your workflow around the strengths of each. In photography, use exposure bracketing, RAW capture, and color-managed pipelines to preserve scene realism and enable creative grading. In home security, prioritize consistent white balance, reliable autofocus, and correct exposure for mixed lighting: hallways, doorways, and exterior lighting all demand different calibrations. The human eye excels at scene understanding, while cameras provide repeatable measurements and documentation. By acknowledging both, you can shoot with intention and evaluate footage with a clear framework rather than relying on guesswork. Best Camera Tips advocates building a practical checklist: set exposure targets, calibrate color, test under typical conditions, and review results from both real-world scenes and controlled tests.
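Exposure bracketing, mentioned above, can be planned with simple arithmetic: each +1 EV step doubles the exposure time and each -1 EV step halves it, with aperture and ISO held constant. The helper below is a hypothetical planning aid, not a camera API, and it assumes an odd number of frames centered on the metered exposure.

```python
def bracket_shutter_times(base_shutter_s: float, ev_step: float, frames: int):
    """Shutter times for a symmetric bracket around the metered exposure."""
    if base_shutter_s <= 0 or ev_step <= 0:
        raise ValueError("base shutter time and EV step must be positive")
    if frames < 1 or frames % 2 == 0:
        raise ValueError("use an odd number of frames so the bracket is centered")
    half = frames // 2
    # Offset i covers -half..+half; each EV doubles or halves the exposure time.
    return [base_shutter_s * 2.0 ** (ev_step * i) for i in range(-half, half + 1)]


if __name__ == "__main__":
    # Metered exposure of 1/8 s, three frames spaced 2 EV apart.
    for seconds in bracket_shutter_times(1 / 8, 2.0, 3):
        print(f"{seconds:.5f} s")
```

In practice the camera rounds these values to its nearest available shutter speeds, and a tripod helps keep the frames aligned for merging.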

Where the analogy breaks down and how to leverage both

The analogy between camera and eye is strongest for teaching concepts, but it breaks down when you require exact reproduction of perception. The camera cannot replicate the brain’s interpretive shortcuts or aesthetic preferences in real time without additional processing. Conversely, the eye cannot produce a timestamped record with verifiable metadata such as exposure, ISO, or coordinates without external instrumentation. The smart approach is to use this knowledge to guide hardware choices and workflows. For photographers, treat the camera as a precise instrument for capturing light and color, then rely on post-processing to achieve your intended look. For security installers, think of your camera as a recording device whose strengths lie in consistency, night vision capabilities, and scene documentation. The synthesis of eye-like perception and camera precision yields the most reliable results when both are understood and used intentionally.

Comparison

| Feature | Human Eye (Biological Vision) | Camera Imaging System |
|---|---|---|
| Light sensitivity & dynamic range | Biological adaptation; pupil dynamics; brain gain control | Sensor exposure; HDR; tone-mapping; sensor noise floor |
| Color interpretation | Context-driven; brain white balance intuition | Sensor-filtered; color profiles; white balance adjustments |
| Depth & motion cues | Stereopsis, motion parallax, accommodation | Lenses, perspective, depth cues in software |
| Resolution & detail | Perceived resolution depends on brain integration | Pixel density; lens sharpness; sensor quality |
| Temporal behavior | Continuous real-time perception; motion interpretation | Frame-based; autofocus algorithms; possible artifacts |
| Calibration & consistency | Contextual interpretation; crowding effects | White balance, color profiles, calibration targets |
| Best use case | Immediate perception in varied lighting | Repeatable capture across scenes with controllable settings |

Positives

- Helps learners grasp how light, color, and motion differ in capture

- Guides camera selection and setting choices across scenes

- Improves expectations for post-processing and color grading

- Useful for evaluating image quality in both photography and security

Downsides

- Analogy can oversimplify biology and perception

- Color fidelity and depth cues differ in subtle ways and are hard to replicate

- Overreliance on camera tech may overlook eye comfort and scene understanding

Cameras are not the same as human eyes, but they complement human vision when used with proper knowledge.

The eye provides dynamic adaptation and context; cameras provide controllable, repeatable capture. Use both for best results.

Common Questions

Is a camera like the human eye in any sense?

Yes, both aim to capture scenes, but they operate differently. A camera is a device that records light digitally, while the eye and brain interpret light as a coherent scene. The analogy helps learning, but expect fundamental differences in processing, adaptation, and interpretation.

Yes, they share goals, but cameras and eyes work differently. Think of the camera as a recording tool and the eye as a perceiving, interpreting system.

How does depth perception differ between cameras and eyes?

Depth cues exist in both, but the mechanisms differ. The eye uses binocular vision and brain-based processing; cameras convey depth through perspective, lens choice, and, in some cases, depth-sensing tech. Perception of depth in photos arises from these cues rather than a live 3D sense.

Depth comes from perspective and lenses in photos, not from real-time 3D sensing like our eyes.

How does dynamic range differ?

The eye adapts dynamically to light and uses neural processing to balance brightness. Cameras rely on sensor capabilities, exposure control, and post-processing to extend range. In scenes with extreme contrast, HDR can help, but the result is not identical to natural vision.

Dynamic range in cameras comes from the sensor and processing; the eye handles it more fluidly with pupil changes and brain adaptation.

Do cameras perceive color the same as eyes?

Not exactly. The eye interprets color with brain context and lighting adaptation; cameras encode color via sensors and color profiles. Consistent color requires careful white balance and calibration across devices.

Color on cameras is built from sensors and settings; your eyes adjust automatically based on lighting.

How should I adapt for security camera use?

For security cameras, prioritize reliable exposure, night vision options, and consistent color rendering. Use proper lighting, configure dynamic range features, and verify footage under typical conditions to ensure usable details when it matters most.

For cameras, set up reliable exposure and lighting so footage remains clear in real situations.

The Essentials

- Analyze exposure to understand brightness perception

- Leverage RAW and HDR to bridge dynamic range gaps

- Calibrate color with white balance and profiles

- Choose lenses for accurate depth and perspective

- Study motion handling and processing to interpret footage