Difference Between a Camera and a Human Eye: A Comprehensive Comparison

Explore the difference between a camera and a human eye, including light capture, processing, color, and perception, with practical insights for photography and security applications.

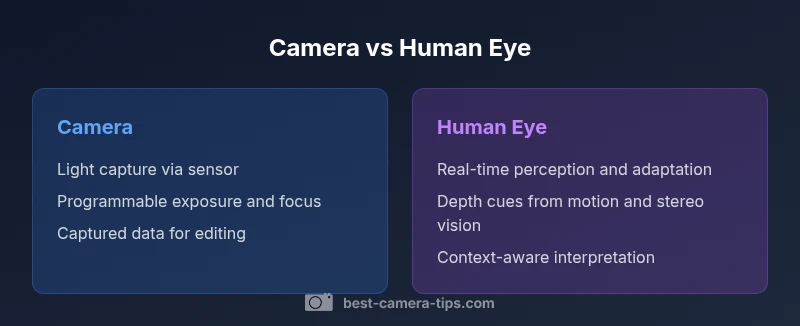

At a high level, the difference between a camera and a human eye lies in control and perception. A camera captures light with a sensor and records discrete frames, while the eye and brain continuously interpret scenes in real time. Cameras offer measurable control (aperture, shutter speed, and ISO), whereas human vision adapts automatically to brightness, movement, and context without conscious tuning. The result is that cameras excel in repeatable captures, whereas eye-brain vision excels in real-time situational awareness.

What this comparison reveals about perception and capture

The difference between a camera and a human eye shapes how photographers plan, shoot, and interpret scenes. According to Best Camera Tips, understanding these contrasts helps translate natural perception into practical techniques. In practice, a camera is a device that translates photons into an editable digital record, while the eye is a biological sensor that drives rapid, context-rich interpretation. This distinction matters not just for gear, but for how an image feels when you view it.

To appreciate the gap, consider two dimensions: physical capture and cognitive interpretation. A camera records light via a fixed sensor array and color filter array, producing data that can be stored, adjusted, or transmitted. The human visual system, by contrast, relies on rods, cones, and neural processing to create a seamless, dynamic view where exposure, focus, and brightness adjust on the fly. In practice, this means a camera may require longer setup or post-processing, while the eye adapts to glare, shadows, and motion with no deliberate input, although full adaptation to darkness can take many minutes. Understanding this helps you decide when to accept an automated shot, and when to intervene with lighting, exposure compensation, or focal adjustments.
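To make the exposure controls mentioned above more concrete, here is a minimal Python sketch of the standard exposure value relation, EV = log2(N² / t), where N is the f-number and t is the shutter time in seconds. The function names are illustrative assumptions rather than part of any camera API, and ISO is held fixed for simplicity.

```python
import math


def exposure_value(f_number: float, shutter_seconds: float) -> float:
    """Exposure value at a fixed ISO: EV = log2(N^2 / t)."""
    if f_number <= 0 or shutter_seconds <= 0:
        raise ValueError("f-number and shutter time must be positive")
    return math.log2(f_number ** 2 / shutter_seconds)


def equivalent_shutter(shutter_seconds: float, old_f: float, new_f: float) -> float:
    """Shutter time that keeps the same EV after changing the aperture.

    Light gathered scales with 1 / N^2, so the time scales with (new_f / old_f)^2.
    """
    if old_f <= 0 or new_f <= 0:
        raise ValueError("f-numbers must be positive")
    return shutter_seconds * (new_f / old_f) ** 2


if __name__ == "__main__":
    print(round(exposure_value(8, 1 / 125), 2))   # EV for f/8 at 1/125 s
    print(equivalent_shutter(1 / 125, 8, 4))      # shutter time needed at f/4
```

Under these assumptions, f/8 at 1/125 s comes out at roughly EV 13, and opening up to f/4 calls for about 1/500 s to hold the same exposure. The eye has no equivalent dial; the pupil and the retina make this trade automatically.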

Comparison

| Feature | Digital Camera | Human Visual System |
|---|---|---|
| Light capture | Sensor-based photon capture with fixed exposure | Biological retina with rods/cones and neural amplification |
| Dynamic range | Hardware-limited, expandable with processing as needed | Adaptive through pupil and brain processing |
| Color processing | Color sensors with white balance; predefined color spaces | Neurological color interpretation influenced by context |
| Focus and depth cues | Autofocus and manual focus with depth-of-field control | Depth perception from stereopsis and motion parallax |
| Motion handling | Frame-based capture; shutter timing controls motion | Continuous perception with temporal integration |
| Noise and artifacts | Sensor noise and compression artifacts | Neural processing limits perceptual noise |
| Learning and adaptation | Programmable settings and post-processing workflows | Experience-based learning and context-aware interpretation |

Positives

- Cameras provide repeatable, adjustable results with precise control over exposure and focus

- Human vision offers real-time perception and automatic adaptation to scene changes

- Cameras enable archival recording and post-processing for flexibility

- Understanding both systems helps you design better workflows and gear choices

- Hybrid use (shoot with a camera, interpret with human vision) often yields the best results

Downsides

- Cameras require power and maintenance, and can introduce processing artifacts

- Eye perception is unreliable for exact measurements; judging brightness, color, or distance precisely requires an instrument or a reference target

- Overreliance on camera automation may reduce situational awareness in dynamic environments

- Some comparisons risk oversimplifying vision science without practical demonstrations

Cameras and the human eye excel in different domains; use the camera for controlled capture and documentation, and rely on human vision for real-time interpretation and decision-making.

The camera offers repeatable, tunable results suitable for analysis and sharing, while the eye-brain system provides fluid perception and rapid adaptation. Recognizing their strengths helps photographers and security professionals choose methods and tools that align with their goals.

Common Questions

What is the key difference between a camera and the human eye?

The key difference lies in control versus interpretation: cameras capture light via sensors with programmable settings, while the eye-brain system continuously interprets light with automatic adaptation. This leads to cameras offering repeatable results and post-processing flexibility, while human vision provides dynamic perception and immediate context.

Camera control and eye perception work differently—one is programmable, the other adaptive in real time.

Which has better dynamic range, cameras or eyes?

A single exposure records a fixed range of brightness set by the sensor, and techniques such as bracketing and HDR processing can extend it to hold detail in both highlights and shadows. The eye-brain system adapts as your gaze moves across a scene, so it often copes with high-contrast light that a single exposure would clip. Each system has strengths depending on the scene and technique used.

Cameras can push dynamic range, while the eye adapts on the fly.
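Dynamic range is usually counted in stops, where each stop is a doubling of light. As a rough illustration, the sketch below computes the range between the brightest and darkest usable signal and estimates how many stops of a scene would be lost in one exposure. The function names and the sample numbers are hypothetical, chosen only for the example.

```python
import math


def dynamic_range_stops(brightest: float, darkest: float) -> float:
    """Range in stops between two signal levels: log2(brightest / darkest)."""
    if brightest <= 0 or darkest <= 0:
        raise ValueError("signal levels must be positive")
    return math.log2(brightest / darkest)


def clipped_stops(scene_stops: float, sensor_stops: float) -> float:
    """Stops of scene contrast that one exposure cannot hold (0 if it all fits)."""
    return max(0.0, scene_stops - sensor_stops)


if __name__ == "__main__":
    # Hypothetical values: a scene spanning a 1024:1 brightness ratio
    scene = dynamic_range_stops(1024, 1)
    print(scene)                       # number of stops in the scene
    print(clipped_stops(scene, 8.0))   # stops lost on an assumed 8-stop sensor
```

When the second number is above zero, that is the cue to bracket or to add light, since the eye will not show you the clipping that the file will.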

Can cameras replicate human color perception exactly?

Cameras simulate color through sensors, color spaces, and white balance, but human color perception is influenced by context and adaptation. While color science can approximate perception, it is not a perfect one-to-one match. Post-processing can help align images with how the viewer might perceive color.

Colors on camera can resemble perception, but aren’t identical to what the eye sees.
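One simple way to see what white balance does is to scale the red and blue channels so that a known neutral patch, such as a gray card, comes out neutral. The sketch below is a simplified illustration of that idea, not how any particular camera implements it; the function names and the sample patch values are assumptions.

```python
def white_balance_gains(gray_patch_rgb):
    """Per-channel gains that make a neutral patch neutral, normalized to green."""
    r, g, b = gray_patch_rgb
    if min(r, g, b) <= 0:
        raise ValueError("patch values must be positive")
    return (g / r, 1.0, g / b)


def apply_gains(pixel, gains, max_value=255.0):
    """Scale one RGB pixel by the gains, clamping to the maximum channel value."""
    return tuple(min(max_value, channel * gain) for channel, gain in zip(pixel, gains))


if __name__ == "__main__":
    # Hypothetical gray card photographed under warm light: too red, too little blue
    gray_card = (200.0, 160.0, 100.0)
    gains = white_balance_gains(gray_card)
    print(gains)
    print(apply_gains(gray_card, gains))  # the card should now read as neutral
```

The eye performs a comparable correction without a reference card, which is part of why a print can look off even when the camera's numbers are technically neutral.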

How do focusing and depth cues differ?

Cameras rely on autofocus systems and depth-of-field control to manage focus. Humans use stereo vision and motion parallax to gauge depth in real time. The result is that cameras can lock focus precisely, but the eye can naturally perceive depth in a fluid scene.

Autofocus vs natural depth cues—two different tools for depth.
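Stereo depth can be approximated with the textbook relation for two parallel pinhole cameras: depth = focal length × baseline / disparity. The sketch below applies that relation; it is a camera model rather than a model of human vision, and the 0.065 m baseline is only an assumed stand-in for the spacing between two eyes.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Distance in meters to a point seen by two parallel cameras.

    focal_px: focal length in pixels
    baseline_m: distance between the two viewpoints in meters
    disparity_px: horizontal shift of the point between the two images, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means infinitely far)")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical setup: 1000 px focal length, 0.065 m between viewpoints
    print(depth_from_disparity(1000, 0.065, 10))  # farther point, small shift
    print(depth_from_disparity(1000, 0.065, 20))  # nearer point, larger shift
```

Nearer objects shift more between the two views, which is the same cue stereopsis relies on; a single camera lens has no such cue and leans on focus distance and depth of field instead.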

What practical tips help beginners compare camera results to eye perception?

Practice comparing your shots to what you remember seeing with your eyes. Use exposure compensation for tricky lighting, bracket when unsure, and review color accuracy with neutral targets. Over time, you’ll learn when the camera’s output aligns with your eye-based impression.

Try comparing shots to memory of the scene and adjust exposures and colors accordingly.
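If you bracket by hand, the shutter times follow directly from the step size, because each stop doubles or halves the exposure time. Here is a small sketch that lists the times for a bracket centered on a base exposure; the function name and defaults are illustrative assumptions, and aperture and ISO are assumed to stay fixed.

```python
def bracket_shutter_speeds(base_seconds: float, step_ev: float = 1.0, frames: int = 3):
    """Shutter times for an exposure bracket centered on base_seconds.

    Each frame differs from its neighbor by step_ev stops; the list runs
    from the darkest (shortest) exposure to the brightest (longest).
    """
    if base_seconds <= 0:
        raise ValueError("base shutter time must be positive")
    if frames < 1 or frames % 2 == 0:
        raise ValueError("frames must be a positive odd number")
    half = frames // 2
    return [base_seconds * 2 ** (k * step_ev) for k in range(-half, half + 1)]


if __name__ == "__main__":
    for t in bracket_shutter_speeds(1 / 100):
        print(f"1/{round(1 / t)} s")
```

Reviewing the bracket afterward and picking the frame that best matches your memory of the scene is a quick way to learn how far your camera's metering sits from your own impression.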

Why study both systems when learning photography or security setup?

Because each system exposes different limits and opportunities. Understanding both helps you plan captures that the camera records faithfully while the viewer experiences the scene as intended. This dual knowledge informs lens choice, lighting, and processing decisions.

Knowing both helps you plan smarter shoots and better setups.

The Essentials

- Identify where cameras excel—control, repeatability, and post-processing.

- Leverage human vision for real-time interpretation and scene awareness.

- Balance automation with manual tweaks to emulate eye-like adaptability.

- Study both systems to design better shoots and smarter security setups.