Camera vs LiDAR: Is Camera Better Than LiDAR? A Practical Comparison

Compare cameras and LiDAR to decide which sensor fits your needs. Learn about depth, resolution, lighting, and deployment considerations for photography, security, and autonomous sensing.

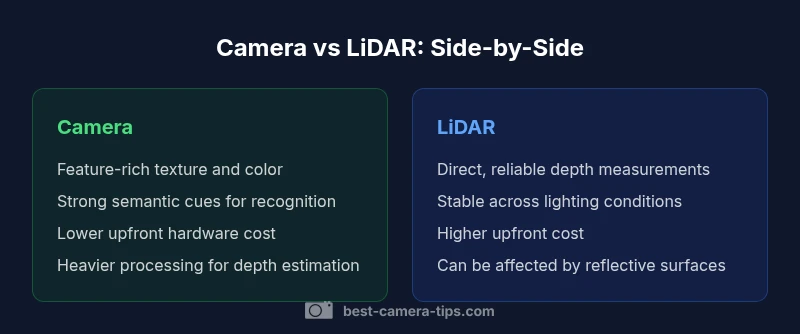

Is a camera better than LiDAR? The answer depends on context: cameras excel at texture, color, and high-resolution detail, while LiDAR provides precise depth measurements and robust ranging. For most mixed-use applications, the better choice depends on environment, required accuracy, and processing resources. This comparison outlines strengths, weaknesses, and practical decision criteria to help you decide which sensor fits your goals.

The Sensor Landscape: Cameras vs LiDAR

In perception, whether a camera is better than LiDAR is not a binary call but a question of intended use, environment, and resources. According to Best Camera Tips, cameras offer rich texture, color, and contextual cues that help humans recognize scenes and objects, while LiDAR delivers direct, reliable depth information that remains stable across lighting changes. For photographers, security installers, and developers, this distinction shapes design choices from the start. Recognizing where texture matters most and where precise geometry is non-negotiable helps you avoid overbuying or underutilizing a sensor. This section frames the tradeoffs and sets the stage for a practical decision framework grounded in real-world needs.

Core Technical Differences

Cameras and LiDAR operate on different physical principles. A camera captures reflected light to form a 2D color image, relying on texture, contrast, and color for interpretation. LiDAR, by contrast, emits pulses and measures return times to produce a 3D point cloud that maps geometry with direct depth data. These fundamental differences drive how each sensor handles clutter, motion, and occlusions. When planning a system, you should map your goals to these data properties: texture and semantics versus precise 3D structure. This understanding helps avoid relying on a single modality to cover all use cases.
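The pulse-timing principle reduces to simple arithmetic: the pulse travels to the target and back, so the range is half the round-trip time multiplied by the speed of light. The following is a minimal sketch, assuming an idealized single return and ignoring atmospheric effects; the function name `tof_range_m` is illustrative and not taken from any LiDAR SDK.

```python
# Idealized time-of-flight ranging: range = c * t / 2.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_range_m(round_trip_s: float) -> float:
    """Convert a pulse's round-trip time (seconds) into a one-way range (meters)."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # Divide by two because the pulse covers the distance twice (out and back).
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


if __name__ == "__main__":
    # A return arriving one microsecond after emission is roughly 150 m away.
    print(f"{tof_range_m(1e-6):.3f} m")
```

The same arithmetic shows why timing precision matters: at this speed, a timing error of one nanosecond corresponds to about 15 cm of range error.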

Resolution, Range, and Depth: What Each Sensor Delivers

Resolution and depth are core attributes that determine how well a sensor supports a given task. Cameras deliver high-resolution textures and color information, enabling fine-grained recognition and scene understanding. LiDAR provides direct depth measurements that enable accurate 3D positioning and mapping. The depth data from LiDAR is particularly valuable for obstacle detection, spatial reasoning, and 3D reconstruction. However, depth from camera data typically requires robust algorithms, such as stereo matching or depth-from-motion, which can introduce estimation errors in textureless scenes. A practical takeaway: expect richer appearance from cameras and more certain geometry from LiDAR.
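To illustrate why camera depth is an estimate rather than a direct measurement, consider the textbook relation for a rectified stereo pair, Z = f · B / d, where f is the focal length in pixels, B the baseline between the two cameras, and d the disparity in pixels. The sketch below is a simplified pinhole model, not a full stereo pipeline; the function names and the example numbers are assumptions chosen for illustration.

```python
def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point from a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means infinitely far)")
    return focal_px * baseline_m / disparity_px


def depth_error_m(focal_px: float, baseline_m: float, depth_m: float,
                  disparity_error_px: float = 1.0) -> float:
    """First-order depth uncertainty: dZ ~= Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


if __name__ == "__main__":
    f_px, base_m = 700.0, 0.12  # hypothetical camera parameters
    z = stereo_depth_m(f_px, base_m, disparity_px=8.4)
    print(f"depth: {z:.2f} m")
    # The same half-pixel matching error costs far more at long range.
    for depth in (5.0, 10.0, 20.0):
        err = depth_error_m(f_px, base_m, depth, disparity_error_px=0.5)
        print(f"at {depth:4.1f} m, a 0.5 px error is about {err:.2f} m")
```

Because the error term grows with the square of the depth, a matching mistake in a textureless region that is harmless up close can become significant at range, which is where direct ranging has the advantage.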

Performance under Varying Lighting and Weather

Lighting conditions strongly influence camera performance. In dim or harsh lighting, image quality degrades, color fidelity worsens, and depth must still be inferred in software from weaker image cues. LiDAR systems are less sensitive to ambient light, offering more consistent depth perception across night or shadowy scenes. Weather introduces its own challenges: heavy rain, fog, or snow can disrupt LiDAR returns or reduce signal quality, while cameras may struggle with glare, haze, or motion blur. For a mixed environment, designers often plan for sensor fusion to maintain performance when one modality struggles.

Use-Case Profiles: When a Camera Shines vs When LiDAR Excels

If your primary goal is texture-rich classification, scene understanding, or aesthetic capture, a camera leads the way. For tasks requiring robust depth and accurate 3D geometry—especially in cluttered or low-contrast settings—LiDAR excels. In many applications, the best solution is not choosing one over the other but using both: cameras provide semantic context while LiDAR anchors perception with precise geometry. In practice, teams often start with a camera-centric setup and add LiDAR as a depth-dedicated supplement.

Data Handling, Processing, and Fusion Possibilities

Camera data demands heavy computation for feature extraction, scene understanding, and depth estimation. LiDAR produces geometry directly, but its point clouds can still require significant processing for noise filtering and fusion. Sensor fusion combines the strengths of both modalities, enabling robust perception across diverse scenes. When planning fusion, consider data alignment, synchronization, and bandwidth. Practically, early-stage systems may run lightweight fusion to reduce latency, then scale up processing as needs evolve. Best Camera Tips recommends a staged approach: prototype with one sensor, then incorporate the second to evaluate gains in accuracy and reliability.
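Data alignment in a fusion pipeline usually means expressing LiDAR points in the camera's coordinate frame and projecting them onto the image, so that each depth sample can be paired with a pixel. The sketch below uses a plain pinhole model without lens distortion; the rotation, translation, and intrinsics values are placeholder assumptions that would come from calibration in a real system, and `project_point` is a hypothetical helper name.

```python
def project_point(point_lidar, rotation, translation, fx, fy, cx, cy):
    """Project one 3D LiDAR point to pixel coordinates (u, v).

    rotation is a 3x3 nested list and translation a 3-vector that together
    map LiDAR coordinates into the camera frame (the extrinsics).
    fx, fy, cx, cy are the pinhole intrinsics in pixels.
    Returns None when the point lies behind the camera.
    """
    # Apply the extrinsics: p_cam = R * p_lidar + t.
    x, y, z = (
        sum(rotation[r][c] * point_lidar[c] for c in range(3)) + translation[r]
        for r in range(3)
    )
    if z <= 0:
        return None  # behind the image plane, not visible
    # Perspective division followed by the intrinsic mapping.
    return (fx * x / z + cx, fy * y / z + cy)


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    no_shift = [0.0, 0.0, 0.0]
    # Placeholder intrinsics for a 1280x720 image.
    print(project_point((1.0, 0.5, 10.0), identity, no_shift, 800.0, 800.0, 640.0, 360.0))
```

A result should also be checked against the image bounds before it is used, since points outside the camera's field of view project to coordinates beyond the frame.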

Cost, Availability, and Lifecycle Considerations

Hardware cost remains a crucial factor: cameras typically cost less upfront and benefit from mature ecosystems, while LiDAR adds a premium for depth sensing hardware. Availability and form factor vary: cameras come in many shapes and sizes, with wide support across platforms. LiDAR options range from compact chips to full scanners, with capabilities that impact integration time and maintenance. Lifecycle considerations include calibration needs, weather-proofing, and software updates. Based on Best Camera Tips Analysis, 2026, planning a scalable upgrade path helps avoid early obsolescence and ensures long-term value.

Integration and Deployment Realities

Deploying a mixed-sensor perception system introduces challenges in synchronization, calibration, and data fusion pipelines. Time alignment between camera frames and LiDAR scans is essential; misalignment can erode advantages. Calibration drift over time requires maintenance routines. Integration with existing hardware and software stacks determines overall feasibility. A thoughtful deployment strategy prioritizes reliable data pipelines, fault tolerance, and clear performance targets. Real-world projects emphasize robust testing under diverse conditions to reveal failure modes early.
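One simple way to approach time alignment is to pair each LiDAR scan with the nearest camera frame and discard pairs whose timestamps differ by more than a tolerance. The following sketch assumes both sensors report timestamps in seconds on a shared clock and that the camera list is sorted; `match_nearest` and the 20 ms default tolerance are illustrative assumptions, not a recommendation from any particular framework.

```python
from bisect import bisect_left


def match_nearest(camera_ts, lidar_ts, max_offset_s=0.02):
    """Pair each LiDAR scan with its nearest camera frame.

    camera_ts must be sorted ascending. Returns a list of
    (lidar_index, camera_index) pairs; scans with no frame within
    max_offset_s are left unmatched.
    """
    pairs = []
    for lidar_index, t in enumerate(lidar_ts):
        pos = bisect_left(camera_ts, t)
        # Only the neighbours on either side of the insertion point can be nearest.
        candidates = [i for i in (pos - 1, pos) if 0 <= i < len(camera_ts)]
        if not candidates:
            continue
        best = min(candidates, key=lambda i: abs(camera_ts[i] - t))
        if abs(camera_ts[best] - t) <= max_offset_s:
            pairs.append((lidar_index, best))
    return pairs


if __name__ == "__main__":
    camera = [0.000, 0.033, 0.066, 0.100]  # roughly 30 fps
    lidar = [0.005, 0.050, 0.101, 0.300]   # the last scan has no nearby frame
    print(match_nearest(camera, lidar))
```

Logging how many scans go unmatched over time is a cheap way to notice clock drift or dropped frames before they erode fusion quality.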

Security, Privacy, and Compliance Implications

Cameras raise privacy concerns due to visual data capture, while LiDAR generally has fewer privacy issues since it does not capture identifiable imagery. Compliance considerations include data governance, retention policies, and access control for sensor data. When designing public-facing systems, implement privacy-preserving processing, such as on-device inference and data minimization. Transparent user communication about data collection and usage helps align with regulatory frameworks and user expectations.

The Future: Trends in Cameras, LiDAR, and Sensor Fusion

Expect continued improvements in camera resolution, dynamic range, and semantic understanding, paired with LiDAR that becomes more compact, affordable, and robust to adverse weather. Sensor fusion will likely become the standard approach for autonomous navigation, robotics, and advanced security systems, offering redundancy and resilience. Industry shifts emphasize real-time processing efficiency, edge computing, and standardized data formats to simplify integration. Staying current with these trends helps practitioners future-proof their deployments.

Practical Decision Framework: A Step-by-Step Checklist

1. Define the primary task: texture/semantic understanding versus precise depth.
2. Assess the operating environment: lighting, weather, clutter, and ranges.
3. Estimate available processing power and budget.
4. Consider a staged approach: start with one sensor, evaluate performance, then add the second for depth.
5. Plan for fusion and calibration strategies, including data alignment and timing.
6. Review privacy and security requirements.
7. Outline a maintenance and upgrade path for sustainability.
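The first steps of the checklist can be expressed as a small rule-of-thumb helper. This is only a sketch of the article's reasoning, not a validated selection method: the function name, its inputs, and the choice to default to a camera when no requirement is stated are all assumptions made for illustration.

```python
def recommend_sensors(needs_semantics: bool, needs_precise_depth: bool,
                      low_light: bool) -> list:
    """Return sensors in suggested adoption order.

    Rules mirrored from the text: semantics favour a camera, precise depth
    or poor lighting favour LiDAR, and when both apply the camera comes
    first with LiDAR added as a depth supplement.
    """
    plan = []
    if needs_semantics:
        plan.append("camera")
    if needs_precise_depth or low_light:
        plan.append("lidar")
    if not plan:
        # Assumption: with no stated requirement, start with the cheaper sensor.
        plan.append("camera")
    return plan


if __name__ == "__main__":
    # Texture-rich classification in a well-lit space.
    print(recommend_sensors(needs_semantics=True, needs_precise_depth=False, low_light=False))
    # Object recognition plus obstacle ranging at night.
    print(recommend_sensors(needs_semantics=True, needs_precise_depth=True, low_light=True))
```

Budget, calibration, privacy, and maintenance (steps 3 and 5 through 7) are deliberately left out, since they depend on project specifics that a boolean rule cannot capture.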

Common Pitfalls and Myths Debunked

- Myth: Cameras alone are enough for all perception tasks. Reality: texture helps but depth accuracy may require LiDAR or fusion.

- Myth: LiDAR makes cameras obsolete in all scenarios. Reality: depth is valuable, but image-based semantics remain essential for robust recognition.

- Myth: Fusion always adds latency. Reality: optimized pipelines can reduce latency while increasing reliability.

The key is to test under real-world conditions and maintain a clear objective for sensor requirements.

Comparison

| Feature | Camera | LiDAR |
|---|---|---|
| Resolution/Texture & Detail | High texture and color fidelity | Direct depth with geometry, limited texture |
| Depth Sensing | Depth inferred via algorithms (stereo/monocular) and semantics | Direct, geometric depth from laser pulses |
| Low-light & Weather | Depends on lighting; performance degrades in dark scenes | Relatively stable across lighting; weather effects vary by material |
| Range & Coverage | Broad range of textures across distance with optical lens | Long-range geometry with consistent returns |
| Cost & Availability | Lower upfront cost, wide ecosystem | Higher upfront cost, specialized devices |
| Processing Demands | Heavy AI/ML workloads for depth and semantics | Point-cloud processing but often fewer frames per second |
| Durability & Versatility | Sensitive to glare, motion blur, occlusion | Robust to lighting; weather-dependent in outdoor use |
| Best For | Texture-rich recognition, scene understanding | Direct 3D mapping and reliable depth in varied conditions |

Positives

- Cameras unlock rich texture, color, and semantic cues

- Cameras are generally cheaper and widely available

- LiDAR provides robust depth data and reliable geometry

- Sensor fusion leverages strengths of both for robust perception

Downsides

- Cameras rely on lighting and texture; performance drops in poor light

- LiDAR is more expensive and adds system complexity

- Camera-based depth estimation can be computation-heavy and latency-prone

- LiDAR data can be sparse on reflective surfaces and in certain weather

Sensor fusion is the recommended approach for most use cases.

Cameras deliver texture and context; LiDAR delivers precise depth. Combining both mitigates individual weaknesses and provides robust perception across environments.

Common Questions

What are the key advantages of cameras over LiDAR?

Cameras provide rich texture, color, and semantic cues that support recognition and labeling. They are also cheaper and have broad ecosystem support. Depth is indirect and relies on algorithms, which can introduce errors in feature-poor scenes.

Cameras give you color and detail, and they’re cheap, but depth isn’t direct and needs computer vision trickery.

What are the main strengths of LiDAR compared to cameras?

LiDAR delivers direct depth measurements and robust 3D geometry, often independent of lighting. It helps with accurate object localization and dense mapping, especially in low-contrast or poor-light environments.

LiDAR provides direct depth and solid 3D geometry, even when light isn’t ideal.

Can I rely on a single sensor, or should I fuse?

Single sensors can perform specific tasks, but fusion generally improves reliability and reduces failure modes. Start with one sensor, then add the other if the task demands deeper perception and resilience.

Usually best to fuse for reliability; start with one sensor and add the other as needed.

How does weather affect cameras vs LiDAR?

Cameras suffer in rain, fog, or strong glare, and their depth estimates rely on software. LiDAR is less sensitive to ambient light but weather like heavy rain or fog can still degrade returns depending on the wavelength and surface.

Cameras struggle with rain and fog; LiDAR handles light better but weather can still affect returns.

What costs should I consider when choosing?

Hardware cost is a major factor: cameras are cheaper, LiDAR adds upfront expense. You should also factor in processing power, bandwidth, calibration, and maintenance over the system’s lifecycle.

Think about upfront hardware, processing needs, and ongoing maintenance.

Is there a standard industry recommendation?

There is no universal standard; many deployments rely on sensor fusion to balance strengths and mitigate weaknesses. The best practice is to tailor sensor choices to task requirements and environmental conditions.

No universal standard—fusion is common to balance strengths.

The Essentials

- Define your priority: texture vs depth

- Consider sensor fusion for reliability

- Budget and processing power drive the choice

- Lighting matters for cameras; weather impacts both

- Plan for calibration, maintenance, and future upgrades